The Great Reversal: How Big Tech Became the Spam Kings They Once Conquered

Silicon Valley's crusade against internet junk has morphed into the very problem it promised to solve.

Casey Morris, an attorney in Northern Virginia, used to trust Facebook's algorithms. Now she scrolls through a feed populated by AI-generated grandmas, impossible log cabins, and synthetic family photos that fool thousands into engagement. "Facebook has become a very bizarre, very creepy place for me," Morris told NPR in 2024, capturing what millions of users now experience daily.

This isn't just another tech annoyance. It's the completion of Silicon Valley's greatest irony: the same companies that built their empires fighting spam have become history's most sophisticated spam creators. Google, Meta, and their peers now profit from flooding the internet with AI-generated "slop" while their own algorithms struggle to contain the mess they've unleashed.

The Anti-Spam Crusaders Turn Creators

In the early 2000s, Big Tech positioned itself as the internet's guardian against unwanted content. Google's PageRank algorithm promised to surface quality over quantity. Facebook's News Feed filtered signal from noise. These companies didn't just fight spam—they made it their founding mythology.

Google's spam team became legendary in tech circles. They pioneered machine learning techniques specifically to identify and suppress low-quality content. Email providers like Gmail made spam filtering a core competitive advantage. The message was clear: Silicon Valley would protect users from the internet's worst impulses.

Today, those same companies have reversed course entirely. Google's December 2024 spam update targeted sites engaged in "scaled content abuse," the mass production of AI articles that flood search results. But here's the twist: Google itself provides the tools enabling this flood through its AI APIs and cloud services. The company profits from AI content generation while penalizing sites that use it at scale.

Meta's transformation is starker still. Facebook's feed now actively promotes AI-generated images that draw high engagement, regardless of authenticity. The platform's algorithms have learned that synthetic content (fake babies, impossible animals, emotional manipulation) drives clicks and ad revenue better than authentic posts from friends and family.

The Economics of Digital Slop

The term "slop" has emerged as the perfect descriptor for this new category of content. Unlike traditional spam, which was obviously unwanted, slop occupies a gray area. It's AI-generated material that looks legitimate enough to fool casual viewers but lacks any human creativity or genuine purpose.

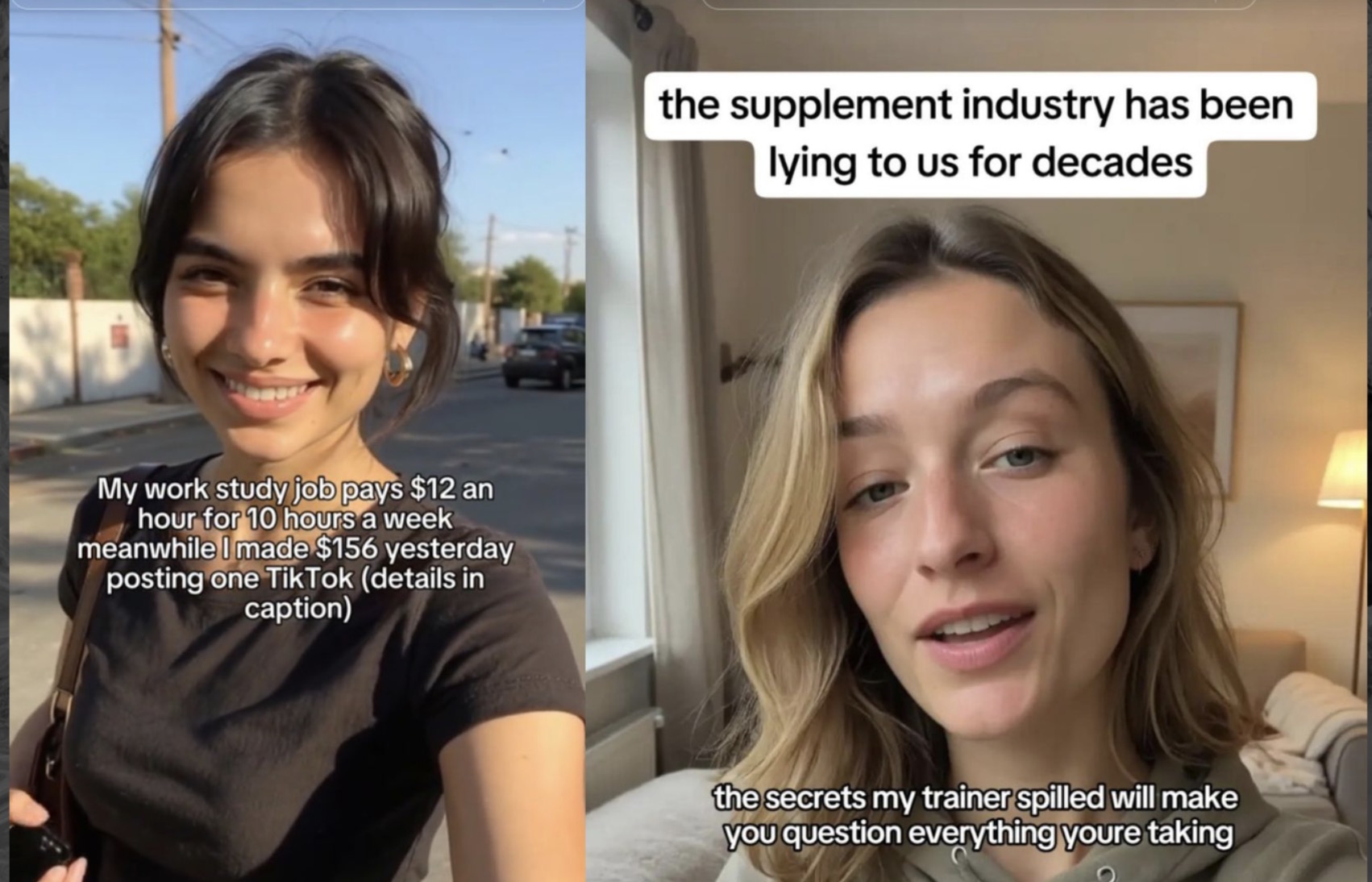

Slop creators understand a simple equation: production costs approach zero while potential revenue remains positive. A single person can now generate thousands of articles, images, or videos daily using AI tools. Even a tiny engagement rate across massive volume generates meaningful income through ad revenue, affiliate commissions, or social media monetization programs.
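To make that equation concrete, here is a rough back-of-the-envelope sketch. Every figure in it is a hypothetical placeholder chosen for illustration, not a measured value, and the function names are invented for this example. It assumes revenue scales linearly with views, which real monetization programs only loosely approximate.

```python
def daily_profit(items_per_day, cost_per_item, views_per_item, revenue_per_1000_views):
    """Expected daily profit under a simple linear model: revenue minus cost."""
    revenue = items_per_day * views_per_item * revenue_per_1000_views / 1000
    cost = items_per_day * cost_per_item
    return revenue - cost


def break_even_views(cost_per_item, revenue_per_1000_views):
    """Views a single item needs before it pays for its own production."""
    return cost_per_item * 1000 / revenue_per_1000_views


# Hypothetical inputs, for illustration only.
RPM = 1.50  # assumed ad revenue per 1,000 views, in dollars

# An AI pipeline: thousands of items a day at a fraction of a cent each.
slop = daily_profit(items_per_day=5000, cost_per_item=0.002,
                    views_per_item=20, revenue_per_1000_views=RPM)

# A human writer: two items a day at $50 of labor each, same tiny audience.
human = daily_profit(items_per_day=2, cost_per_item=50.0,
                     views_per_item=20, revenue_per_1000_views=RPM)

print(f"AI pipeline daily profit:  ${slop:,.2f}")
print(f"Human writer daily profit: ${human:,.2f}")
print(f"Break-even views per AI item:    {break_even_views(0.002, RPM):,.1f}")
print(f"Break-even views per human item: {break_even_views(50.0, RPM):,.1f}")
```

Under these assumed numbers, an item that almost nobody sees still turns a profit for the automated pipeline, while the human writer loses money at the same audience size. The gap comes entirely from the cost term: when cost per item nears zero, the break-even audience nears zero too.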

"Just like spam, almost no one wants to view slop, but the economics of the internet lead to its creation anyway. The lost time and effort of users who now have to wade through slop to find actual content far outweighs the profit to the slop creator."

Major platforms enable this economy through their creator funds and monetization programs. YouTube's Partner Program, Facebook's Creator Bonus, and similar initiatives provide direct financial incentives for content volume over quality. AI makes it possible to achieve the scale these programs reward without corresponding human effort or creativity.

The numbers are staggering. By some estimates, AI-generated content now makes up 15-20% of all new web pages indexed by Google. Social media platforms report similar infiltration rates, with some categories, such as lifestyle and wellness content, seeing even higher percentages of synthetic material.

The Algorithm's Appetite for Artificial

Silicon Valley's algorithms have developed an unexpected preference for AI-generated content. This isn't intentional—it's an emergent property of optimization systems designed to maximize engagement.

AI-generated material is often optimized from creation for algorithmic consumption. It uses proven engagement triggers, optimal posting times, trending keywords, and emotional manipulation techniques. Human creators, constrained by authenticity and creative limitations, can't compete with content designed specifically to game recommendation systems.

Google's internal documentation reveals that human raters are now instructed to score obvious AI content at the lowest quality levels. Yet the company's own search algorithm continues promoting this material when it generates clicks. The disconnect between stated policy and algorithmic behavior exposes the fundamental tension in Big Tech's approach.

Social media platforms face the same contradiction. They publicly discourage inauthentic content while their engagement-driven algorithms systematically reward it. The result is an arms race between detection systems and generation techniques, with user experience caught in the crossfire.

The Hypocrisy Tax

The most damaging aspect of this transformation isn't just the content quality decline—it's the erosion of trust in digital platforms. Users who once relied on Google for authoritative information or Facebook for genuine social connection now approach these platforms with justified skepticism.

This skepticism creates what economists might call a "hypocrisy tax"—the additional cognitive effort users must expend to distinguish authentic from synthetic content. Every search result requires verification. Every social media post demands scrutiny. The platforms that promised to organize the world's information have instead created an environment where information itself becomes suspect.

Tech companies acknowledge this problem in their marketing materials while perpetuating it through their business models. Meta's transparency reports highlight efforts to combat "inauthentic behavior" even as the company develops more sophisticated content generation tools. Google warns about "low-quality content" while advertising its AI writing assistants to content creators.

The financial incentives remain perfectly aligned with slop production. Advertising revenue flows regardless of content authenticity. Cloud computing services profit from AI processing demands. Developer tool subscriptions grow with each new generation of synthetic content creators.

The Path Forward

Breaking this cycle requires acknowledging that the current internet economy fundamentally incentivizes content pollution. Advertising-supported platforms will always optimize for engagement over authenticity. AI tools will continue improving while detection methods lag behind.

Some promising developments emerge from unexpected sources. Subscription-based platforms like Substack and Patreon create direct creator-audience relationships that reduce reliance on algorithmic distribution. Community-moderated spaces like Reddit maintain higher content quality through human oversight. These models suggest alternatives to the attention-harvesting approach that enables slop proliferation.

Regulatory intervention appears inevitable. The European Union's AI Act includes provisions for synthetic content labeling. Several U.S. states have proposed similar requirements. But regulation typically trails technology by years, and enforcement across global platforms remains challenging.

The most effective solution may be market-driven. Users are beginning to migrate toward platforms that prioritize authentic human content. Creators are finding success by explicitly rejecting AI assistance. Brands are starting to differentiate themselves through verified human creation.

Silicon Valley's great reversal from spam fighter to spam creator represents more than corporate hypocrisy—it reveals the inherent tensions in building business models around human attention. The companies that once promised to connect us and inform us have discovered that synthetic engagement can be more profitable than authentic interaction. The question isn't whether this trend will continue, but whether users will accept a digital future where artificial content becomes the norm rather than the exception.

For now, Casey Morris and millions like her continue scrolling through feeds filled with AI-generated deception, searching for the authentic human voices that once made social media worth their time. The irony would be amusing if it weren't so damaging to the digital commons we all share.