Apple Just Handed Your Siri Data to Google. Here's Why That's Not the Story.

The real privacy scandal isn't what you think it is.

Apple pays Google $1 billion to outsource Siri's brain to Gemini AI. Your iPhone's most intimate conversations—the embarrassing voice searches, personal reminders, private questions you'd never ask aloud—now flow through Google's servers. The company that built a trillion-dollar empire on "privacy first" messaging just signed a multi-year deal to share your data with the world's largest advertising company.

Except that's not actually what happened. The real story is far more complex, and far more revealing about the future of privacy in the age of AI.

The Technical Reality Behind the Headlines

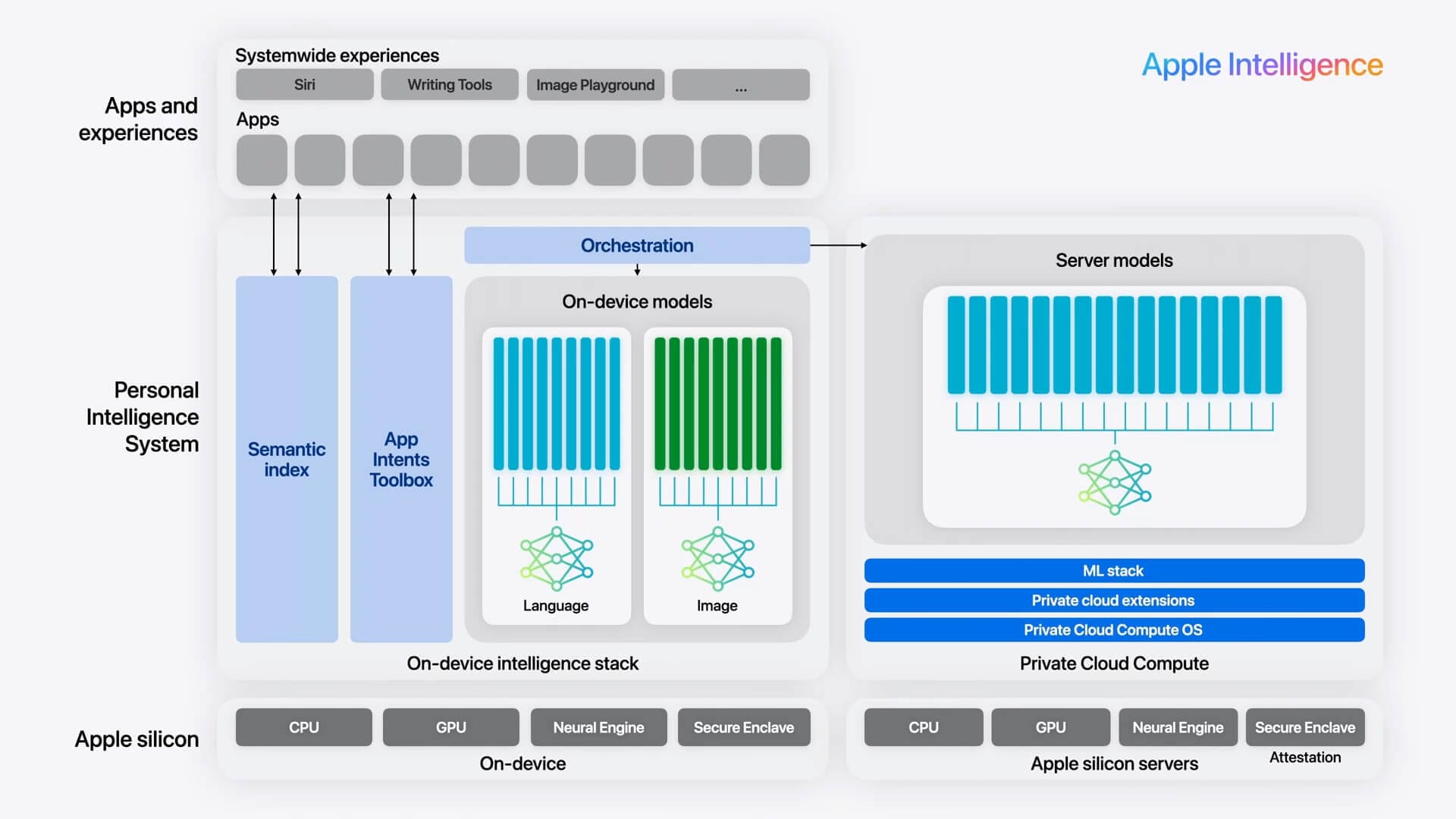

Apple isn't simply shipping your Siri requests to Google's data centers. The partnership operates through Apple's Private Cloud Compute infrastructure, where a customized version of Gemini runs on Apple-controlled servers. When you ask Siri something complex, your request is encrypted on your device, processed on those Apple-run servers using Google's AI models, and the result is returned to you without Google ever seeing the raw data.

Think of it like hiring a consultant who works in your office, using your equipment, under your supervision. Google provides the expertise, but Apple maintains the environment and controls access.
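To make that data flow concrete, here is a toy Python sketch of the idea. It is not Apple's or Google's actual protocol: every name in it (`OperatorNode`, `LicensedModel`, `vendor_log`, `toy_cipher`) is hypothetical, the shared session key is a simplification, and the XOR cipher is an insecure stand-in for real encryption. It only illustrates the trust boundary described above: the vendor supplies the model, while the operator's server is the only place the request is ever decrypted.

```python
import hashlib
import secrets
from dataclasses import dataclass, field


def toy_cipher(key: bytes, data: bytes) -> bytes:
    """Toy symmetric cipher (NOT secure): XOR with a SHA-256-derived keystream.

    Applying it twice with the same key returns the original bytes.
    """
    stream = bytearray()
    counter = 0
    while len(stream) < len(data):
        stream.extend(hashlib.sha256(key + counter.to_bytes(8, "big")).digest())
        counter += 1
    return bytes(a ^ b for a, b in zip(data, stream))


class LicensedModel:
    """Hypothetical stand-in for vendor-supplied model weights.

    It only transforms text; it has no logging and no network access.
    """

    def generate(self, prompt: str) -> str:
        return f"answer to: {prompt}"


@dataclass
class OperatorNode:
    """Hypothetical server run by the device maker that hosts the licensed model."""

    session_key: bytes
    model: LicensedModel
    # Everything the model vendor would receive. Nothing is ever written here.
    vendor_log: list = field(default_factory=list)

    def handle(self, ciphertext: bytes) -> bytes:
        # Decrypt inside the operator's environment only.
        prompt = toy_cipher(self.session_key, ciphertext).decode()
        reply = self.model.generate(prompt)
        # The prompt and reply are not stored or forwarded to the vendor.
        return toy_cipher(self.session_key, reply.encode())


def ask(prompt: str, node: OperatorNode) -> str:
    """Device side: encrypt the request, send it, decrypt the reply."""
    ciphertext = toy_cipher(node.session_key, prompt.encode())
    return toy_cipher(node.session_key, node.handle(ciphertext)).decode()


if __name__ == "__main__":
    node = OperatorNode(secrets.token_bytes(32), LicensedModel())
    print(ask("remind me to call the dentist", node))
    print("records sent to vendor:", len(node.vendor_log))
```

In this sketch the privacy guarantee is structural: the vendor's code runs, but nothing in the operator's environment hands it a channel for getting data back out.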

The distinction matters enormously. Google's standard Gemini service collects user interactions to improve its models and inform its advertising algorithms. This Apple implementation strips away those data collection mechanisms entirely. Google gets paid upfront instead of harvesting user data for future monetization.

"Apple is building its next-generation Foundation Models on Google's Gemini AI and cloud technology, but the model will run on Apple's Private Cloud Compute servers to ensure user data security and full control."

But here's where it gets interesting: this technical architecture reveals something profound about Apple's privacy strategy that most observers are missing entirely.

The Privacy Paradox Apple Actually Solved

For years, Apple faced an impossible choice. Build competitive AI in-house and lag behind Google and OpenAI by years, or partner with external AI companies and compromise user privacy. Every major AI breakthrough required massive data collection and cloud processing power that Apple simply couldn't match while maintaining its privacy standards.

The Gemini partnership solves this paradox through what industry experts call "privacy-preserving AI licensing." Apple gets access to cutting-edge AI capabilities without sacrificing user data. Google gets a billion-dollar payday without ongoing data access. Users get smarter AI without surveillance.

This isn't Apple abandoning privacy. It's Apple proving privacy-first AI is commercially viable at scale.

Consider the alternative: Apple could have continued developing AI internally while competitors pulled ahead with superior voice assistants, image generation, and predictive features. Users would eventually migrate to more capable platforms, taking their data with them. Apple chose technological competitiveness over ideological purity, but structured the deal to preserve privacy protections.

What Google Actually Gets From This Deal

Google's motivation extends beyond the immediate billion-dollar payment. The partnership legitimizes Google's AI technology as enterprise-grade and privacy-compatible, opening doors to similar deals with other privacy-conscious companies.

More strategically, Google gains influence over Apple's AI development roadmap. While Google can't access user data, it can shape how Apple's AI features evolve. Every Siri improvement, every new Apple Intelligence capability, will build on Gemini's foundation models and architectural decisions.

Google also eliminates a potential competitor. Instead of Apple developing rival AI technology that could threaten Google's dominance, Apple becomes a customer dependent on Google's continued innovation. It's the classic "embrace, extend, extinguish" playbook updated for the AI era: embrace, extend, influence.

But Apple isn't naive about this dynamic. The company negotiated strict contractual controls over how Gemini operates within Apple's infrastructure, and maintains the ability to switch AI providers if Google's strategic interests conflict with Apple's user privacy commitments.

The Real Privacy Story Nobody's Talking About

The actual privacy revelation isn't that Apple shares data with Google. It's that Apple's privacy messaging was always more about competitive positioning than absolute data protection.

Apple still collects vast amounts of user data for its own services. Location data for Maps, purchase history for the App Store, health information for Apple Watch features, and behavioral patterns for personalized recommendations all flow through Apple's servers. The company's privacy stance was never "no data collection"—it was "no data sharing with third parties for advertising purposes."

This distinction becomes crucial when analyzing the Gemini partnership. Apple maintains its core privacy principle: user data doesn't leave Apple's control for advertising monetization. Google processes requests within Apple's infrastructure but cannot access, store, or monetize the underlying user information.

"Apple weaponized privacy to be able to enter their competitors' market, all while very openly lying it's all about the user."

Critics argue this proves Apple's privacy stance was always strategic rather than principled. Supporters counter that Apple found a way to advance user experience without compromising core privacy protections. Both perspectives miss the larger point: privacy in the AI era requires new frameworks that balance user protection with technological capability.

What This Means for Your Data

Practically speaking, your Siri interactions remain as private as before. Apple's Private Cloud Compute architecture ensures that even Apple employees can't access your raw voice data or query contents. The addition of Gemini processing doesn't change these fundamental protections.

What changes is Siri's capability. Enhanced natural language understanding, better contextual responses, improved multilingual support, and more sophisticated task completion all become possible through Gemini's advanced language models. You get smarter AI without surveillance trade-offs.

The broader implications extend beyond individual privacy. Apple's approach demonstrates that privacy-preserving AI partnerships are technically feasible and commercially viable. Other companies will likely adopt similar models, potentially shifting the entire industry away from surveillance-based AI development.

However, users should understand that privacy protection increasingly depends on corporate architecture and contractual agreements rather than simple data isolation. Your privacy relies on Apple maintaining its commitment to these protections even as business pressures and competitive dynamics evolve.

The Future of Privacy in an AI World

The Apple-Google partnership signals a fundamental shift in how privacy and AI capability intersect. Instead of treating privacy and performance as opposing forces, companies can structure partnerships that advance both simultaneously.

This model will likely expand across the tech industry. Microsoft could partner with privacy-focused AI providers for Windows features, Meta could develop privacy-preserving AI training methods for Instagram and Facebook, and Amazon could create secure cloud enclaves for Alexa improvements that protect user data while leveraging external AI capabilities.

The key insight: privacy isn't about avoiding all data processing. It's about controlling who processes your data, how they process it, and what they can do with the results. Apple's Gemini partnership succeeds because it maintains user control while expanding AI capabilities.

Your Siri requests aren't going to Google. They're being processed by Google's technology within Apple's privacy framework. That distinction will define the next generation of AI development across the entire tech industry.