The 6-Gigawatt War: Why Meta's AMD Deal Signals the End of the AI Hype Era

While everyone obsesses over the next ChatGPT, the real battle is happening in data centers where power matters more than prompts.

Tech pundits are busy debating whether Claude beats ChatGPT. Meanwhile, Meta has signed a deal that reveals what the AI arms race actually looks like. The company committed to purchasing 6 gigawatts' worth of AMD GPUs, hardware whose power draw is comparable to that of 4.5 million homes. This isn't about building better chatbots. This is about who can actually run AI at the scale that matters.
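As a quick sanity check on the homes comparison, the sketch below works out the average draw per home that the two quoted figures imply. The function name is illustrative, and the calculation assumes the comparison is based on average continuous draw over a full year.

```python
# Back-of-envelope check of the "6 GW vs. 4.5 million homes" comparison.
# Both inputs are figures quoted in the article; nothing here is measured data.
DEAL_POWER_GW = 6.0
HOMES = 4_500_000

KW_PER_GW = 1_000_000      # 1 GW = 1,000,000 kW
HOURS_PER_YEAR = 24 * 365  # assumption: non-leap year, continuous draw


def implied_kw_per_home(power_gw: float, homes: int) -> float:
    """Average continuous draw per home implied by the comparison, in kW."""
    if homes <= 0:
        raise ValueError("homes must be positive")
    return power_gw * KW_PER_GW / homes


kw_per_home = implied_kw_per_home(DEAL_POWER_GW, HOMES)
kwh_per_year = kw_per_home * HOURS_PER_YEAR
print(f"{kw_per_home:.2f} kW per home, about {kwh_per_year:,.0f} kWh per year")
```

Readers can compare the resulting per-home figure against whatever household consumption estimate they trust to judge how reasonable the comparison is.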

The deal, announced days after Meta expanded its Nvidia partnership, tells a different story than the headlines suggest. Meta isn't just hedging its bets or diversifying for risk management. The company is preparing for an AI infrastructure war where power consumption, not model parameters, determines the winners.

The Infrastructure Reality Check

Six gigawatts is more than a big number on a press release. To put this in perspective, BloombergNEF forecasts that total US data center power demand will reach 78 gigawatts by 2035. Meta's single deal with AMD is equivalent to nearly 8% of that entire projected demand.
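The "nearly 8%" figure is simple division, sketched here for readers who want to swap in a different forecast. The function name is illustrative; the inputs are the two numbers quoted above.

```python
# Share of the BloombergNEF 2035 forecast represented by the AMD deal.
DEAL_GW = 6.0
US_DEMAND_2035_GW = 78.0  # forecast cited in the article


def share_percent(part_gw: float, total_gw: float) -> float:
    """Return part_gw as a percentage of total_gw."""
    if total_gw <= 0:
        raise ValueError("total_gw must be positive")
    return 100.0 * part_gw / total_gw


share_2035 = share_percent(DEAL_GW, US_DEMAND_2035_GW)
print(f"Deal as share of 2035 forecast: {share_2035:.1f}%")  # prints 7.7%
```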

The first gigawatt of AMD chips won't even ship until the second half of 2026, with the full deployment spanning multiple hardware generations. This timeline reveals something crucial: Meta sees AI infrastructure as a decade-long build, not a quarterly sprint to the next model release.

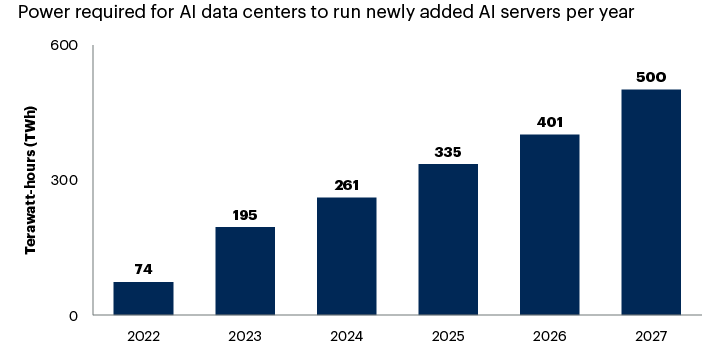

Training GPT-4 required around 30 megawatts of power. OpenAI's Stargate initiative anticipates multi-gigawatt data centers.

The math is staggering. If GPT-4 training consumed 30 megawatts, Meta's 6-gigawatt capacity could theoretically train 200 similar models simultaneously. But that's not the real story. The power isn't going toward training. It's going toward inference, the unglamorous work of actually running AI models for billions of users every day.
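The 200-model figure can be reproduced in a few lines. This is a rough sketch, not a capacity plan: it treats the 30-megawatt figure as the full draw of one training run, and the `overhead_factor` parameter (with its example value of 1.25) is a hypothetical knob for cooling and other facility overhead, not a number from the deal.

```python
# How many GPT-4-scale training runs could 6 GW power at once?
GPT4_TRAINING_MW = 30.0  # figure quoted in the article
DEAL_GW = 6.0            # figure quoted in the article
MW_PER_GW = 1000.0


def concurrent_runs(capacity_gw: float, run_mw: float,
                    overhead_factor: float = 1.0) -> float:
    """Number of simultaneous runs the capacity could power.

    overhead_factor > 1 inflates each run's draw to account for cooling
    and other facility overhead (hypothetical parameter).
    """
    if run_mw <= 0 or overhead_factor <= 0:
        raise ValueError("run_mw and overhead_factor must be positive")
    return capacity_gw * MW_PER_GW / (run_mw * overhead_factor)


ideal = concurrent_runs(DEAL_GW, GPT4_TRAINING_MW)
with_overhead = concurrent_runs(DEAL_GW, GPT4_TRAINING_MW, overhead_factor=1.25)
print(ideal)          # 200.0
print(with_overhead)  # 160.0 under the illustrative 25% overhead
```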

Unlike the fragmented consumer markets for electric vehicles or smartphones, AI infrastructure is controlled by a handful of financially powerful tech companies. Meta's deal with AMD isn't just procurement. It's market positioning.

Beyond the Nvidia Monopoly

Meta's partnership with AMD involves custom GPUs based on the MI450 architecture, a key difference from its Nvidia arrangements. While Nvidia sells relatively standardized chips to the highest bidder, AMD is building custom silicon specifically for Meta's workloads.

This customization matters more than most realize. Generic GPUs excel at training AI models because training requires flexible, general-purpose computation. But inference, running trained models at scale, benefits from specialized hardware optimized for specific tasks.

Ben Bajarin of Creative Strategies noted that customization represents the key differentiator in AMD's approach. While Nvidia dominates the training market with standardized chips, AMD is betting on custom inference solutions.

The deal structure reveals this strategy. AMD will issue Meta 160 million shares of common stock, vesting in tranches tied to hardware delivery milestones. This isn't a typical vendor relationship. It's a strategic partnership where both companies' futures depend on successful execution.

The Power Problem Nobody Talks About

The AI industry faces a supply chain crisis, but it's not the GPU shortage everyone discusses. The real constraint is power infrastructure.

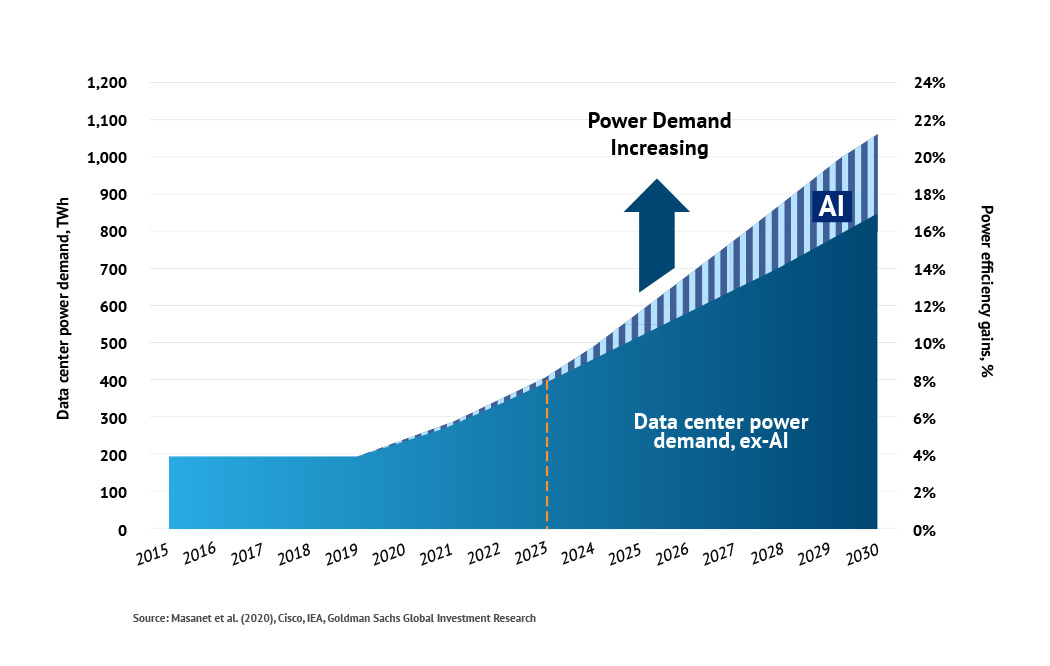

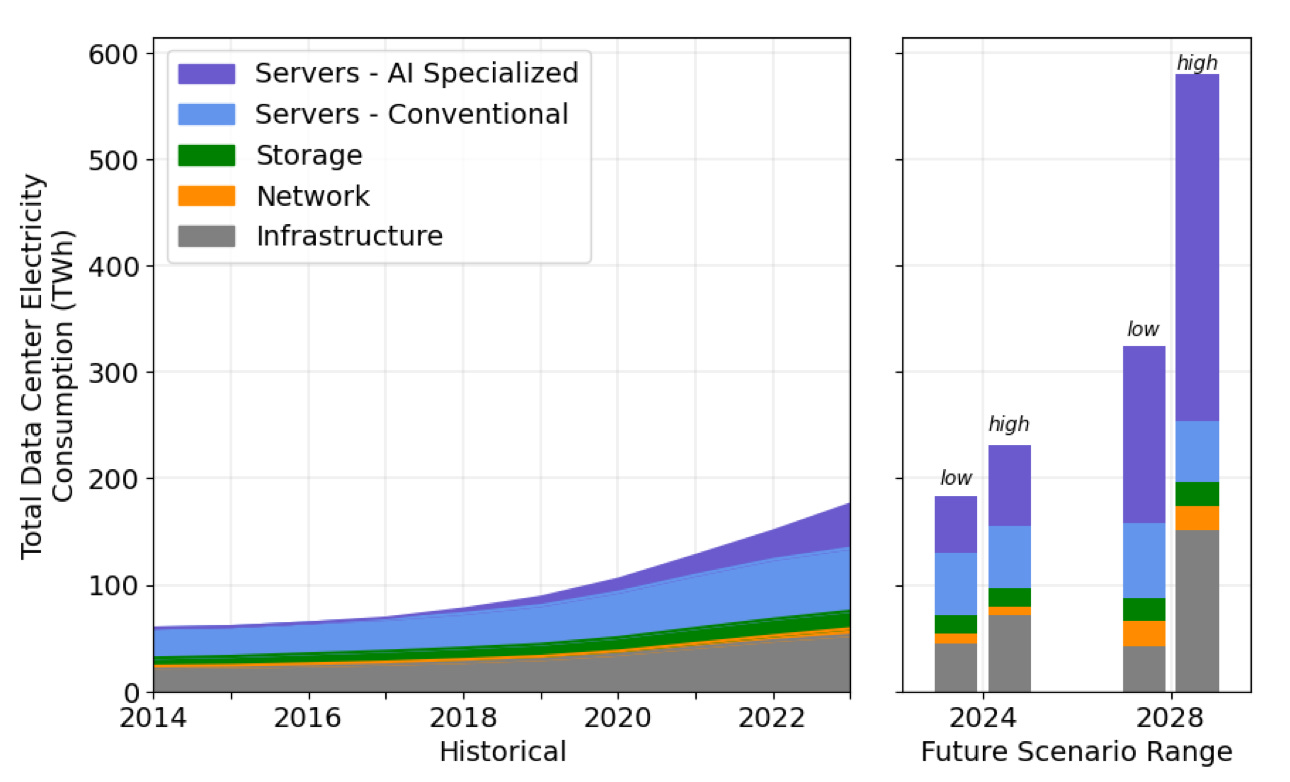

US data centers drew approximately 35 gigawatts in 2024. That number is forecast to more than double by 2035, with AI workloads driving most of the growth. China and the United States are projected to account for nearly 80% of global data center electricity consumption growth through 2030.

In the United States, data centers are projected to account for almost half of electricity demand growth through 2030. This isn't sustainable with current grid infrastructure.

Meta's 6-gigawatt deal is equivalent to roughly 17% of the power US data centers drew in 2024. The company isn't just buying chips. It's claiming a massive stake in future electrical grid capacity before competitors can secure it.
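The 17% figure, and the growth rate implied by going from 35 gigawatts in 2024 to the 78 gigawatts forecast for 2035, can be checked with the sketch below. The inputs are the numbers quoted in this article; the smooth compound-growth assumption is mine, added only to turn the two endpoints into an annual rate.

```python
# Deal size relative to 2024 demand, plus the implied annual growth rate
# between the 2024 figure and the 2035 forecast.
DEAL_GW = 6.0
US_DEMAND_2024_GW = 35.0
US_DEMAND_2035_GW = 78.0

share_2024 = 100.0 * DEAL_GW / US_DEMAND_2024_GW

years = 2035 - 2024
growth_multiple = US_DEMAND_2035_GW / US_DEMAND_2024_GW

# Assumption: demand compounds at a constant rate each year.
annual_growth = growth_multiple ** (1.0 / years) - 1.0

print(f"Deal as share of 2024 demand: {share_2024:.1f}%")
print(f"Demand multiple 2024 to 2035: {growth_multiple:.2f}x")
print(f"Implied annual growth: {annual_growth * 100:.1f}%")
```

Under these assumptions the implied growth works out to between 7% and 8% a year, which is steady compounding rather than anything explosive. The strain comes from how slowly grid capacity can be added, not from the headline rate alone.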

The timing matters. Grid infrastructure takes years to build, while AI demand is growing exponentially. Companies that secure power capacity today will have insurmountable advantages over those who wait.

The Real AI Arms Race

While startups fight over model benchmarks and chatbot features, Meta is playing a different game entirely. The company operates at scales where infrastructure constraints become existential challenges.

Facebook serves 3.07 billion monthly active users. Instagram adds another 2 billion. WhatsApp reaches 2.78 billion people. Every AI feature Meta deploys must work for billions of users simultaneously, not thousands of beta testers.

This scale changes everything. A chatbot that works for 10,000 users might collapse under 10 million. A recommendation algorithm that seems fast for early adopters could become unusably slow at Facebook's scale.

Meta's dual partnership with Nvidia and AMD reflects this reality. The company needs multiple suppliers, custom solutions, and massive redundancy. At Meta's scale, a single point of failure is not an option.

The number of AI models that companies run in production has grown rapidly, but most companies still think in terms of individual models rather than AI infrastructure platforms.

Most companies approach AI like they approached websites in 1995: one project at a time. Meta approaches AI like Amazon approaches cloud infrastructure: as a platform that must scale to serve arbitrary demand.

What This Means for Everyone Else

Meta's AMD deal signals the end of AI's experimental phase. The technology is moving from research labs to production infrastructure at unprecedented scale and speed.

For other tech companies, this creates a stark choice. Invest in AI infrastructure now, while capacity exists, or accept permanent disadvantage in markets where AI becomes table stakes.

For investors, the lesson is clear: AI infrastructure matters more than AI applications. The companies building the pipes will capture more value than those building the faucets.

The deal also reveals something uncomfortable about AI's future. The technology won't democratize intelligence. It will concentrate it among companies with sufficient capital and infrastructure to operate at gigawatt scale.

Meta's 6-gigawatt commitment isn't just about running better AI models. It's about securing the infrastructure foundation for the next decade of computing. While competitors debate model architectures and training techniques, Meta is claiming the electrical grid capacity that will determine who can actually deploy AI at scale.

The age of AI experimentation is ending. The age of AI infrastructure has begun. And in that war, watts matter more than words.